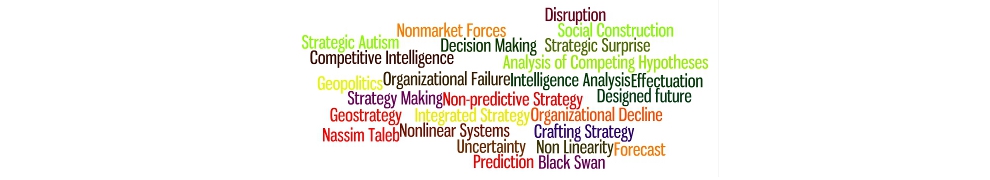

Philip Tetlock and his team have just released an interesting article entitled “The Psychology of Intelligence Analysis: Drivers of Prediction Accuracy in World Politics” in the Journal of Experimental Psychology: Applied (January 12, 2015). Their article summarizes the findings of the Intelligence Advanced Research Projects Activity (IARPA) tournament that we drew our readers’ attention to in 2013. If you’re interested in intelligence analysis, forecasting or geopolitics, the article is certainly worth your time. Nevertheless, we have our differences with Messrs Tetlock et al.

Some of the article’s conclusions are part of the received wisdom of forecasting. For example, they conclude, “We developed a profile of the best forecasters; they were better at inductive reasoning, pattern detection, cognitive flexibility, and open-mindedness. They had greater understanding of geopolitics, training in probabilistic reasoning, and opportunities to succeed in cognitively enriched team environments. Last but not least, they viewed forecasting as a skill that required deliberate practice, sustained effort, and constant monitoring of current affairs.” (Hurrah, and here’s to Open Sources! The important drivers of geopolitics are not remotely secret.) While these conclusions might sound intuitive, it is useful to document that they stand up to sustained scrutiny in a controlled experiment.

Some of Tetlock and his team’s other conclusions also jibe with our (more sociological) approach to understanding the challenges of forecasting. Among other things, they find that when it comes to anticipating major geopolitical events, teams outperform individuals, and laymen can be trained to be effective analysts using only open sources.

The publication of this article, however, is also an excellent occasion to remind people of the shortcomings of a psychological approach to understanding success and failure in intelligence and geopolitical analysis. As we explore in Constructing Cassandra, purely psychological approaches present intermediate-level theories: they do not necessarily conflict with – but also do not entirely transcend – competing approaches to the problem (such as those presented by studies of organizational behavior or discussions of the “politicization” of intelligence).

Moreover, while the new paper certainly analyses the role of collective dynamics in the processing of information (which is a huge step forward when compared to simple “psychological biases” work), without an underpinning in the sociology of knowledge, some key root questions about intelligence analysis are left unaddressed. For example: exactly which questions are asked, by whom, in response to what, and why? As you seek to answer them, who gets ignored, when and why? Which questions are simply rejected? How and why does that happen?

As Wohlstetter wrote in 1962, “The job of lifting signals out of a confusion of noise is an activity that is very much aided by hypotheses.” As I discussed last May at the Spy Museum in Washington, that remains true in today’s “Big Data” environment, and Tetlock’s experiments are a worthy attempt to determine who individually and collectively most effectively does that “lifting”, or sorting, of signals from noise.

One more failure of imagination…

BUT, what the IARPA work and Tetlock’s experiments do not address is the root cause of surprise, which in our view is the “problem of the wrong puzzle” or, in Intelligence, bad Tasking (AKA “failures of imagination”, that phrase so beloved of the 9/11 Commission, which is now often wheeled out as a deus ex machina after a surprise has occurred).

In contrast, we believe the question of Tasking is vital, and that “Cassandras” – those who give warning but are ignored – merit systematic and sustained study. They are interesting exactly because their imaginations don’t fail, yet for reasons that extend well beyond the merely psychological, their warnings (which should result in Tasking or further analysis) are ignored. In other words, given a particular set of questions, who answers them best is quite interesting. More interesting, however, is what questions are not being asked, and who’s excluded from the debate. Tetlock’s work does not directly address these dilemmas, but we think the answers lie in the realm of the culture and identity of the organization performing the analysis.

Until the role of the culture and identity of analytic teams and intelligence agencies as a whole is systematically addressed, we will have more strategic surprises than necessary. The beginning of a cure for any problem is a sound diagnosis. Our diagnosis is that the core challenges of intelligence analysis are socially constructed. In short, our hats are off to Dr. Tetlock and his team, but they need to dig deeper!

Naturally, we would welcome your comments on the IARPA research or Constructing Cassandra, and if you enjoyed this blog post, why not subscribe?