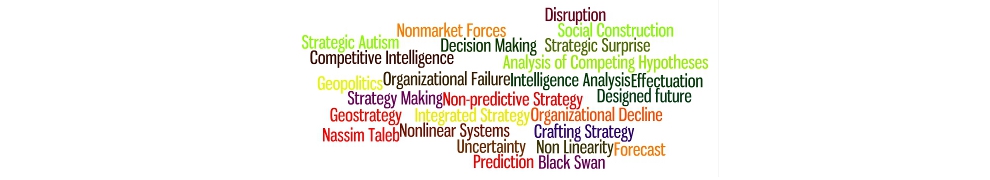

In the first part of this series, Milo and I examined the complexity of nonlinear environments and argued that, in such an environment, energy spent on a deep understanding of the present beats attempts at predicting the future. Hence our call for a non-predictive approach to strategy.

Nonlinear systems can be found in nature, but they are particularly common and problematic when they involve human issues. While such human nonlinear systems can display regularities over long time periods, most major political, economic and business issues are essentially nonlinear and permeated by social facts. What these human-centered nonlinear systems have in common, and what is often overlooked, is that one cannot deal with them as if they were natural science problems. For one thing, as we argued in a recent Forbes article using the example of Usama bin Ladin, how you define the issue you’re dealing with depends on who you are. This is also the reason that “genius” fails.